Load Balancing PHP Services with Gruxi's Reverse Proxy

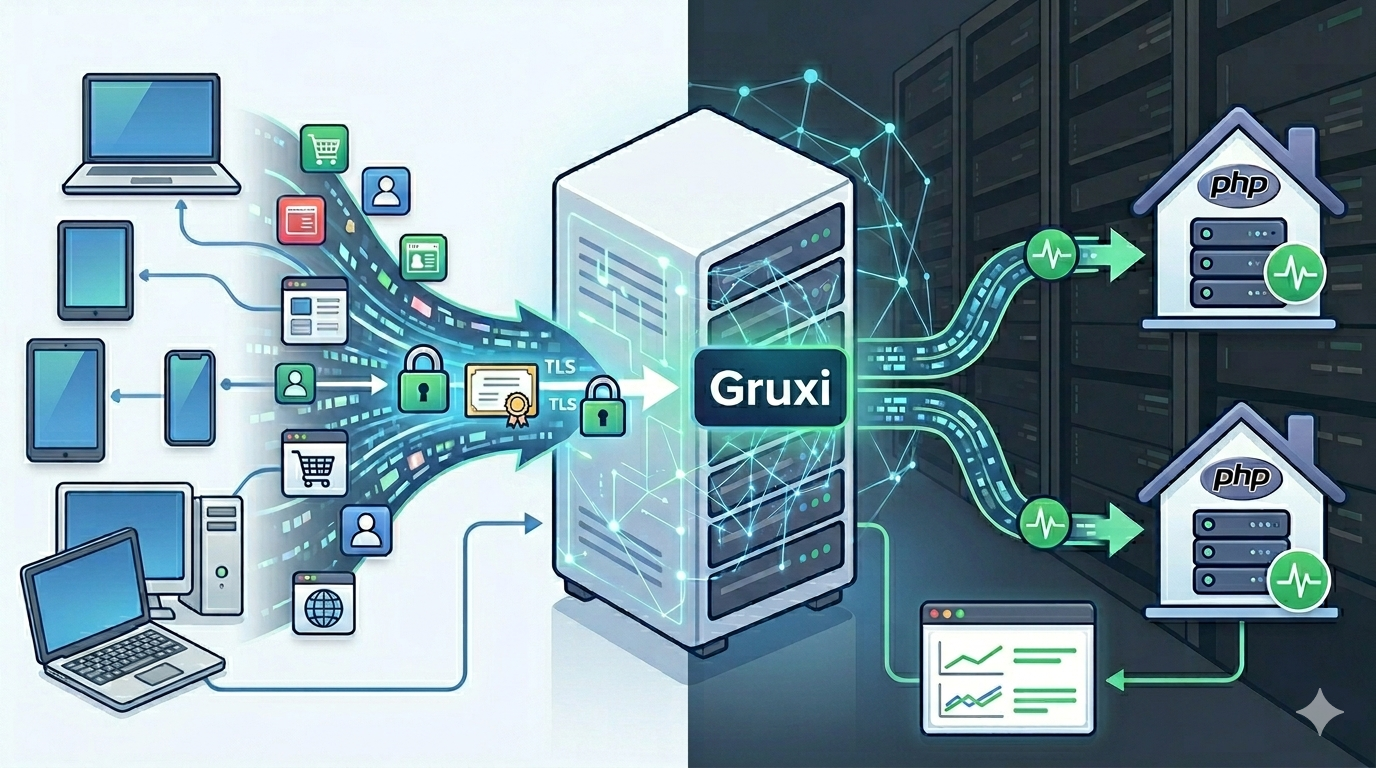

When a PHP application grows beyond a single process or single container, the next operational step is usually some form of reverse proxy and load balancing. That often means adding another moving part just to distribute traffic, terminate TLS, and monitor which backend is healthy. Gruxi can handle that layer itself.

This guide walks through a practical setup where Gruxi sits in front of two PHP service instances and distributes traffic between them with built-in health checks. The backends expose HTTP, while Gruxi handles the reverse proxy role, TLS offloading, and monitoring from one place.

If you are looking for a reverse proxy or load balancing example for PHP services with Gruxi, this is the quickest way to see how the feature works in practice.

Scenario: two PHP service instances behind one entry point

Assume you have a small PHP API or application that you want to run as more than one instance. A common example is two containers serving the same codebase so you can spread traffic, restart one instance without taking the whole service down, or prepare for a larger multi-node setup later.

At that point you usually want one public endpoint in front of both backends:

- clients connect to one hostname

- Gruxi accepts HTTP or HTTPS traffic

- Gruxi forwards requests to multiple backend services

- unhealthy backends are removed from rotation automatically

The important detail is that Gruxi's reverse proxy load balancing works with HTTP upstreams. In other words, Gruxi balances PHP services that already expose HTTP, such as PHP apps running in containers behind a small app server or PHP's built-in development server for a lab setup.

The request flow looks like this:

    Client
      |
      v
    Gruxi (:80 / :443 / :8000 admin)
      |
      +--> php-app-1:8080
      |
      +--> php-app-2:8080

This keeps the public edge simple while letting you scale the PHP service horizontally.

Why use Gruxi as the reverse proxy layer

Gruxi's proxy processor gives you the features you normally expect from a separate reverse proxy tier:

- reverse proxying to HTTP and HTTPS backends

- load balancing across multiple upstream servers

- built-in health checks

- TLS termination at the edge

- monitoring through the admin portal and metrics support

For a PHP deployment, that means you can let the backend containers focus on the application while Gruxi handles the concerns that sit at the front door.

That is especially useful if you want one server product to cover several roles at once:

- terminate TLS for the public site

- distribute traffic with round robin balancing

- stop sending traffic to a failed backend

- inspect health and request activity without adding another dashboard immediately

If you are already using Gruxi for PHP handling elsewhere, this is also a straightforward way to use it as a PHP reverse proxy for service-style applications.

Example setup: Gruxi with two PHP backends

The simplest demo is a docker-compose.yml file with one Gruxi container and two identical PHP app containers.

    services:
      gruxi:
        image: ghcr.io/daevtech/gruxi:latest
        depends_on:
          - php-app-1
          - php-app-2
        ports:
          - "80:80"
          - "443:443"
          - "8000:8000"
        volumes:
          - ./gruxi/db:/app/db
          - ./gruxi/logs:/app/logs
          - ./gruxi/certs:/app/certs
        restart: unless-stopped
        networks:
          - appnet

      php-app-1:
        image: php:8.3-cli
        working_dir: /app
        command: sh -c "php -S 0.0.0.0:8080 -t public"
        volumes:
          - ./app:/app
        restart: unless-stopped
        networks:
          - appnet

      php-app-2:
        image: php:8.3-cli
        working_dir: /app
        command: sh -c "php -S 0.0.0.0:8080 -t public"
        volumes:
          - ./app:/app
        restart: unless-stopped
        networks:
          - appnet

    networks:
      appnet:
        driver: bridge

Add a minimal application in app/public/index.php that shows which backend handled the request:

    <?php

    declare(strict_types=1);

    $hostname = gethostname() ?: 'unknown-backend';

    header('Content-Type: text/plain; charset=utf-8');

    echo "Hello from PHP behind Gruxi\n";
    echo "Served by: {$hostname}\n";

For health checks, add app/public/healthz.php:

    <?php

    declare(strict_types=1);

    http_response_code(200);
    header('Content-Type: text/plain; charset=utf-8');

    echo "ok\n";

Start everything with:

    docker compose up -d

Then open the Gruxi admin portal at https://localhost:8000 and configure a site with a Proxy Processor.

Use settings like these:

- Name: php-service-lb
- URL Match Patterns: *
- Proxy Type: HTTP
- Load Balancing Strategy: Round Robin
- Upstream Servers: http://php-app-1:8080,http://php-app-2:8080
- Health Check Path: /healthz.php
- Health Check Interval: 10
- Health Check Timeout: 3
- Timeout: 30

At that point, Gruxi becomes the public entry point and the PHP containers stay private on the internal Docker network.
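Because the backends are not published to the host, the health check path cannot be opened from a browser directly. One way to confirm that it responds is to probe it from inside the Docker network. The sketch below assumes the docker-compose.yml and healthz.php from this guide; probe_health is a hypothetical helper name, and it uses the PHP binary already present in the php:8.3-cli image rather than assuming curl is installed in any container.

```shell
# probe_health FROM_SERVICE TARGET_SERVICE
# Starts a one-off PHP process inside FROM_SERVICE and fetches the health
# endpoint of TARGET_SERVICE over the internal Docker network.
# Port 8080 and /healthz.php match the example setup in this guide.
probe_health() {
  docker compose exec -T "$1" \
    php -r 'echo file_get_contents("http://" . $argv[1] . ":8080/healthz.php");' -- "$2"
}

# Example (run manually from the directory containing docker-compose.yml):
#   probe_health php-app-1 php-app-2
```

If the target backend is up, the command should print the body that healthz.php returns. A warning from PHP instead of a body suggests the backend is not reachable on that path.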

If you want HTTPS at the edge, configure the site binding and certificate in Gruxi as usual. TLS terminates at Gruxi, and the proxy forwards traffic to the backend services afterward.

Testing that traffic is balanced

Once the proxy processor is saved and the configuration is reloaded, open the site through Gruxi, for example at http://localhost.

Refresh the page several times. Because the sample app prints the container hostname, you should see requests alternate between the two backends over time.
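Refreshing by hand works, but a short loop makes the distribution easier to see. The following is a minimal sketch, assuming curl and awk are available on the host and the site answers at http://localhost as above; tally_backends is a hypothetical helper name, not something Gruxi provides.

```shell
# tally_backends: read response bodies on stdin and count how many
# "Served by: <hostname>" lines each backend produced.
tally_backends() {
  awk -F': ' '/^Served by: / { count[$2]++ }
              END { for (host in count) print count[host], host }' | sort -k2
}

# Example (run manually): send 10 requests through Gruxi and tally them.
#   for i in 1 2 3 4 5 6 7 8 9 10; do curl -s http://localhost/; done | tally_backends
```

With round robin and both backends healthy, the two counts should come out close to equal. The hostnames shown are the container IDs that Docker assigns by default.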

You can also confirm it from the container logs:

    docker compose logs -f php-app-1 php-app-2

As you refresh the page or send a few test requests with curl, both containers should show traffic.

If you want to verify health-check behavior, stop one backend temporarily:

    docker compose stop php-app-2

After the health check fails, Gruxi should remove that upstream from rotation and continue serving traffic through php-app-1. Start the container again and it should return to service once the health check passes.

That is the practical advantage of using a PHP load balancer with health checks instead of manually splitting traffic and hoping every backend remains available.
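To watch the failover as it happens, it can help to poll the site from a second terminal while stopping and starting a backend. This is a rough sketch, assuming curl and sed on the host and the sample index.php from this guide; poll_site is a hypothetical helper name, and the 5-second request timeout is an arbitrary choice.

```shell
# poll_site URL COUNT [INTERVAL]
# Requests URL COUNT times, pausing INTERVAL seconds (default 1) between
# requests, and prints the request number plus the backend that answered.
poll_site() {
  url=$1
  count=$2
  interval=${3:-1}
  i=1
  while [ "$i" -le "$count" ]; do
    # Extract the hostname from the "Served by: ..." line of the sample app.
    backend=$(curl -s --max-time 5 "$url" | sed -n 's/^Served by: //p')
    printf '%s %s\n' "$i" "${backend:-no-response}"
    i=$((i + 1))
    sleep "$interval"
  done
}

# Example (run manually): poll once per second for a minute.
#   poll_site http://localhost/ 60 1
```

While one backend is stopped, the output should settle on a single hostname once the health check has marked the other upstream as failed. How long that takes depends on the configured health check interval and timeout.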

Monitoring upstream health and request rates

Gruxi's admin portal is useful here because it lets you observe the setup without adding a separate management stack first.

From the admin UI, you can inspect:

- current request activity and server status

- live metrics in a more user-friendly format

If you want to go further, Gruxi also supports metrics exposure for Prometheus through the /metrics endpoint on port 8001 when telemetry is enabled. That gives you a path from quick built-in visibility to a more formal monitoring setup later.
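Before pointing Prometheus at the endpoint, it can be useful to look at what it exposes. Note that the example docker-compose.yml in this guide publishes only ports 80, 443, and 8000, so reaching port 8001 from the host would presumably require adding a mapping such as "8001:8001" to the gruxi service. The sketch below assumes the endpoint returns the standard Prometheus text exposition format with "# TYPE" lines; list_metric_names is a hypothetical helper, and no specific Gruxi metric names are assumed.

```shell
# list_metric_names: read Prometheus text-format output on stdin and print
# the unique metric names declared in "# TYPE <name> <type>" lines.
list_metric_names() {
  awk '$1 == "#" && $2 == "TYPE" { print $3 }' | sort -u
}

# Example (run manually, with telemetry enabled and port 8001 reachable):
#   curl -s http://localhost:8001/metrics | list_metric_names
```

The resulting list is a quick way to see which series are available before writing scrape rules or dashboards.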

For many teams, the useful workflow is simple:

- Configure the proxy and load balancing in the admin UI.

- Confirm both PHP backends are healthy.

- Generate some traffic.

- Watch request rates and server state in the monitoring view.

- Optionally enable Prometheus scraping if you want dashboards and alerting beyond the built-in portal.

That makes Gruxi practical both as a reverse proxy for PHP services and as the first monitoring surface for the deployment.

Built-in load balancing for PHP services

If your PHP application already runs as an HTTP service, Gruxi gives you a clean way to put load balancing, health checks, and TLS termination in front of it without bringing in an additional reverse proxy product first.

That makes it a strong fit for small service clusters, internal APIs, containerized PHP applications, and early high-availability setups where you want to scale out gradually but still keep the stack understandable.

If you want to continue from here, review the Proxy docs, the metrics docs, and the built-in admin UI article.

With Gruxi, you get built-in load balancing for PHP services. Try scaling out your service, put Gruxi in front of it, and verify the upstream health and request flow from one place.